How Data Teams Build Skills for Agentic Analytics: 7 Practical Steps

A practical 7-step guide for data teams to design, organize, and test skills that improve analytics agent reliability and chat-with-data outcomes.

27 February 2026

By Claire, Co-founder & CEO

Most data teams start with prompts, then hit the same wall: answers sound plausible, but quality is inconsistent.

If you are building an analytics agent, a better approach is to treat reusable instructions as a system component. Skills are that component.

This guide shows how data teams can build and operate skills for agentic analytics in an open source workflow, especially when they run dbt on a modern data stack and serve business users who need to chat with data daily.

Why create skills

Without skills, your analytics agent behaves like one generalist trying to answer every business question with the same logic.

That creates three recurring issues for data teams:

- Inconsistent metric interpretation across domains,

- Low trust from business teams when answers vary by prompt wording,

- Slow iteration because every fix is global instead of domain-specific.

Skills solve this by turning domain logic into reusable context blocks. Instead of one generic behavior, you get specialized behavior by domain, with clear rules and measurable performance.

Skills also make intent explicit at query time. Users can call a specific skill directly to tell the agent which business domain the analysis belongs to. That reduces ambiguity early and helps the agent pick the right definitions faster.

What a skill should do in an analytics agent

A skill is not just "better prompting." A skill should reduce ambiguity in core workflows:

- Which sources are trusted and in what order,

- How to scope SQL work by model layer and business domain,

- How to apply context engineering rules before query generation,

- How to produce outputs that analysts can validate quickly.

In practice, tightening these workflows improves reliability faster than switching models.

7 practical steps to build skills for data teams

1. Start from recurring failure patterns

Pull the last 20 to 50 failed analytics agent interactions.

Group them by failure type:

- Wrong metric definition,

- Wrong table grain,

- Missing business rule,

- Weak output format for stakeholder handoff.

Each recurring pattern should map to one skill objective.
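One way to do this grouping is to label each reviewed interaction with a failure type and count the labels. A minimal sketch, assuming you label failures by hand during review: the label strings, the objective wording, and the min_count threshold are hypothetical, chosen only to mirror the list above.

```python
from collections import Counter

# Hypothetical mapping from failure labels to skill objectives.
# The labels mirror the failure types above; rename them to match your review.
FAILURE_TO_OBJECTIVE = {
    "wrong_metric_definition": "Pin metric definitions to the semantic layer",
    "wrong_table_grain": "Declare table grain before any aggregation",
    "missing_business_rule": "Encode the domain's business rules explicitly",
    "weak_output_format": "Enforce a stakeholder-ready output contract",
}


def skill_objectives(failures: list[str], min_count: int = 2) -> list[tuple[str, str, int]]:
    """Return (failure_type, objective, count) tuples for recurring failures,
    most frequent first; failures seen fewer than min_count times are skipped."""
    counts = Counter(failures)
    return [
        (failure, FAILURE_TO_OBJECTIVE.get(failure, "Unmapped: review manually"), n)
        for failure, n in counts.most_common()
        if n >= min_count
    ]


if __name__ == "__main__":
    # Labels assigned by hand while reviewing failed interactions (made-up sample).
    reviewed = [
        "wrong_table_grain", "wrong_metric_definition", "wrong_table_grain",
        "missing_business_rule", "wrong_table_grain", "wrong_metric_definition",
        "weak_output_format",
    ]
    for failure, objective, n in skill_objectives(reviewed):
        print(f"{n:>2}  {failure:<24} -> {objective}")
```

Dropping one-off failures keeps the first skills focused on patterns that actually recur, which is what makes each objective worth encoding.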

2. Define one workflow per skill

Avoid generic skills like "be better at SQL."

Use narrow scopes instead:

- Marketing performance analysis,

- Finance KPI analysis,

- Product funnel analysis,

- Sales pipeline analysis.

Narrow skills are easier to test and easier to trust.
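Keeping one workflow per skill is easier when each skill is described by a small, explicit spec. The sketch below is hypothetical: SkillSpec, its fields, the sample rule, and the markdown layout it renders are illustrations, not the file format nao expects (the nao skills docs define that).

```python
from dataclasses import dataclass, field


@dataclass
class SkillSpec:
    """Hypothetical description of one narrowly scoped skill: one domain, one workflow."""
    name: str
    domain: str
    workflow: str
    rules: list[str] = field(default_factory=list)

    def __post_init__(self) -> None:
        # A skill without a domain ("be better at SQL") is too generic to test.
        if not self.domain.strip() or not self.workflow.strip():
            raise ValueError(f"Skill {self.name!r} needs a domain and a single workflow")

    def to_markdown(self) -> str:
        """Render the spec as markdown. The section layout is illustrative only."""
        lines = [f"# {self.name}", "", f"Domain: {self.domain}",
                 f"Workflow: {self.workflow}", "", "## Rules"]
        lines += [f"- {rule}" for rule in self.rules]
        return "\n".join(lines) + "\n"


finance_kpis = SkillSpec(
    name="finance-kpi-analysis",
    domain="finance",
    workflow="Finance KPI analysis",
    rules=["Report revenue in the reporting currency"],  # hypothetical rule
)
print(finance_kpis.to_markdown())
```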

3. Encode context engineering constraints

Your skill should define context priority explicitly:

- Use metric and semantic definitions first,

- Use dbt docs and lineage second,

- Use warehouse metadata third,

- Ask clarifying questions before guessing.

This is the difference between random retrieval and applied context engineering.
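The priority order above amounts to "the first trusted source wins, otherwise ask." A minimal sketch, assuming each source has already been loaded into a dictionary of metric definitions; the source names, metric names, and definitions are all hypothetical.

```python
# Sources listed in the priority order the skill declares. Each maps a metric
# name to a definition; all names and definitions here are hypothetical.
semantic_layer = {"active_users": "Distinct users with at least one session in the period"}
dbt_docs = {
    "active_users": "Row count of fct_sessions",
    "churn_rate": "Churned customers / customers at period start",
}
warehouse_metadata = {"churn_rate": "Column comment on dim_customers.churn_flag"}

PRIORITY = [
    ("semantic_layer", semantic_layer),
    ("dbt_docs", dbt_docs),
    ("warehouse_metadata", warehouse_metadata),
]


def resolve_metric(metric: str, sources: list[tuple[str, dict[str, str]]]) -> dict[str, str]:
    """Return the definition from the first (highest-priority) source that has one.
    If no source defines the metric, return a clarifying question instead of guessing."""
    for source_name, definitions in sources:
        if metric in definitions:
            return {"source": source_name, "definition": definitions[metric]}
    return {"source": "none",
            "clarifying_question": f"How should '{metric}' be defined for this analysis?"}


print(resolve_metric("active_users", PRIORITY)["source"])  # prints: semantic_layer
print(resolve_metric("nps", PRIORITY)["clarifying_question"])
```

Here active_users resolves to the semantic layer even though dbt docs also define it, and an unknown metric produces a question rather than a guess.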

4. Add strict SQL and analysis rules

For analytics work, include guardrails that prevent unsafe shortcuts:

- Define grain before aggregation,

- Validate time windows and timezone assumptions,

- Prefer documented marts over raw layers,

- Return assumptions and checks with every answer.

If your team uses dbt, include model-layer expectations directly in the skill.
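Some of these guardrails can be checked mechanically before a query runs. The sketch below assumes a hypothetical convention where each dbt layer lives in its own schema (raw, staging, marts) and where the agent reports the grain and timezone it assumed. The regex is deliberately naive; a production check would use a proper SQL parser.

```python
import re
from typing import Optional

# Hypothetical schema-per-layer naming convention; adapt it to your dbt project.
PREFERRED_LAYERS = {"marts"}
DISCOURAGED_LAYERS = {"raw", "staging"}

# Naive extraction of schema.table after FROM/JOIN; a real check would parse the SQL.
TABLE_REF = re.compile(r"\b(?:from|join)\s+([a-z_]\w*)\.([a-z_]\w*)", re.IGNORECASE)


def check_query(sql: str, declared_grain: Optional[str], timezone: Optional[str]) -> list[str]:
    """Return guardrail violations for a generated query; an empty list means it passes."""
    issues = []
    if not declared_grain:
        issues.append("Grain not declared before aggregation")
    if not timezone:
        issues.append("Timezone for the time window not stated")
    for schema, table in TABLE_REF.findall(sql):
        layer = schema.lower()
        if layer in DISCOURAGED_LAYERS:
            issues.append(f"{schema}.{table} is in the {layer} layer; prefer a documented mart")
        elif layer not in PREFERRED_LAYERS:
            issues.append(f"{schema}.{table} is in an unrecognized layer; confirm it is documented")
    return issues


sql = ("select o.order_date, count(*) from raw.orders o "
       "join marts.dim_customers c on o.customer_id = c.customer_id group by 1")
print(check_query(sql, declared_grain=None, timezone="UTC"))
```

Returning the list of issues, rather than a bare pass/fail, gives the agent something concrete to report alongside its answer.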

5. Add output contracts for business users

When teams chat with data, output structure matters as much as SQL quality.

A strong skill can enforce:

- Decision summary first,

- SQL and metric logic second,

- Validation notes third,

- Next actions last.

This keeps answers usable across technical and non-technical audiences.
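An output contract can be enforced by rendering every answer from the same structure. A minimal sketch; the AnalyticsAnswer class, the section titles, and the sample values are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class AnalyticsAnswer:
    """Hypothetical output contract: sections always render in the same order."""
    decision_summary: str
    sql: str
    metric_logic: str
    validation_notes: list[str] = field(default_factory=list)
    next_actions: list[str] = field(default_factory=list)

    def render(self) -> str:
        # Refuse to hand off an answer that carries no validation notes.
        if not self.validation_notes:
            raise ValueError("An answer needs at least one validation note")
        sections = [
            ("Summary", self.decision_summary),
            ("SQL and metric logic", f"{self.sql}\n{self.metric_logic}"),
            ("Validation notes", "\n".join(f"- {note}" for note in self.validation_notes)),
            ("Next actions", "\n".join(f"- {step}" for step in self.next_actions)),
        ]
        return "\n\n".join(f"{title}\n{body}" for title, body in sections)


answer = AnalyticsAnswer(
    decision_summary="Placeholder: one-sentence decision for the stakeholder.",
    sql="select week, count(distinct user_id) from marts.fct_sessions group by 1",
    metric_logic="Weekly active users, one row per ISO week (UTC).",
    validation_notes=["Checked that week boundaries use UTC"],
    next_actions=["Share with the growth team"],
)
print(answer.render())
```

Raising on missing validation notes is a design choice that ties the contract back to step 4: an answer without checks never reaches the stakeholder.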

6. Build skills by data domain (sub-agent pattern)

The most effective pattern is domain-specific skills:

- Marketing analytics skill,

- Finance analytics skill,

- Product analytics skill,

- Sales analytics skill.

Each domain skill can behave like a focused sub-agent with its own definitions, KPIs, and rules.

You can orchestrate this in RULES.md by declaring which domain skills are available and when to use each one. In practice, this acts like lightweight orchestration: the agent routes to the right domain behavior instead of applying one global instruction set.
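The routing that RULES.md describes can be pictured as a small function. This is a sketch of the idea, not how nao implements routing: the skill names, keyword lists, and the "@skill-name" invocation syntax are all hypothetical.

```python
import re
from typing import Optional

# Hypothetical routing table mirroring what RULES.md might declare:
# available skill -> keywords that signal its domain.
ROUTES = {
    "marketing-analytics": ["campaign", "cac", "channel", "attribution"],
    "finance-analytics": ["revenue", "margin", "arr", "burn"],
    "product-analytics": ["funnel", "activation", "retention", "feature"],
    "sales-analytics": ["pipeline", "deal", "quota", "win rate"],
}

# Hypothetical syntax for a user naming a skill explicitly, e.g. "@finance-analytics".
EXPLICIT = re.compile(r"@([a-z-]+)")


def route(question: str) -> Optional[str]:
    """Pick a domain skill for a question. An explicit mention wins; otherwise the
    domain with the most whole-word keyword hits. Returns None when there is no
    signal or a tie, so the agent can ask which domain applies."""
    text = question.lower()
    for name in EXPLICIT.findall(text):
        if name in ROUTES:
            return name
    scores = {
        skill: sum(bool(re.search(rf"\b{re.escape(kw)}\b", text)) for kw in keywords)
        for skill, keywords in ROUTES.items()
    }
    ranked = sorted(scores.items(), key=lambda item: item[1], reverse=True)
    (top, top_score), (_, runner_up) = ranked[0], ranked[1]
    if top_score == 0 or top_score == runner_up:
        return None
    return top


print(route("How did the signup funnel convert last week?"))
print(route("Revenue by campaign last quarter"))
```

Returning None on a tie, rather than silently picking one domain, follows the same "ask before guessing" rule as step 3.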

7. Version and test every skill change

Treat skill updates like code changes:

- Version in git,

- Review in pull requests,

- Keep a before/after benchmark diff,

- Assign a clear owner per domain skill.

This aligns skill operations with the rest of your open source and data stack workflow.
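A before/after benchmark diff does not need much tooling. A minimal sketch, assuming you already have a pass/fail result per benchmark question for each skill version, however you produce it; the question IDs and results are made up.

```python
def benchmark_diff(before: dict[str, bool], after: dict[str, bool]) -> dict[str, list[str]]:
    """Compare per-question pass/fail results between two versions of a skill."""
    shared = before.keys() & after.keys()
    return {
        "fixed": sorted(q for q in shared if not before[q] and after[q]),
        "regressed": sorted(q for q in shared if before[q] and not after[q]),
        "added": sorted(after.keys() - before.keys()),
        "removed": sorted(before.keys() - after.keys()),
    }


def accuracy(results: dict[str, bool]) -> float:
    """Share of benchmark questions that passed."""
    return sum(results.values()) / len(results) if results else 0.0


# Made-up results keyed by hypothetical benchmark question IDs.
before = {"q1_monthly_revenue": True, "q2_churn_by_plan": False, "q3_cac_by_channel": True}
after = {"q1_monthly_revenue": True, "q2_churn_by_plan": True, "q3_cac_by_channel": False}

print(benchmark_diff(before, after))
print(f"accuracy {accuracy(before):.0%} -> {accuracy(after):.0%}")
```

Both versions score 67% here, yet the diff shows one fix and one regression. Posting the per-question diff in the pull request, not just the headline number, is what lets the domain owner catch that.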

How to build and test skills in nao

In nao, you can provide skills as part of your agent context so the assistant can read and apply them during execution.

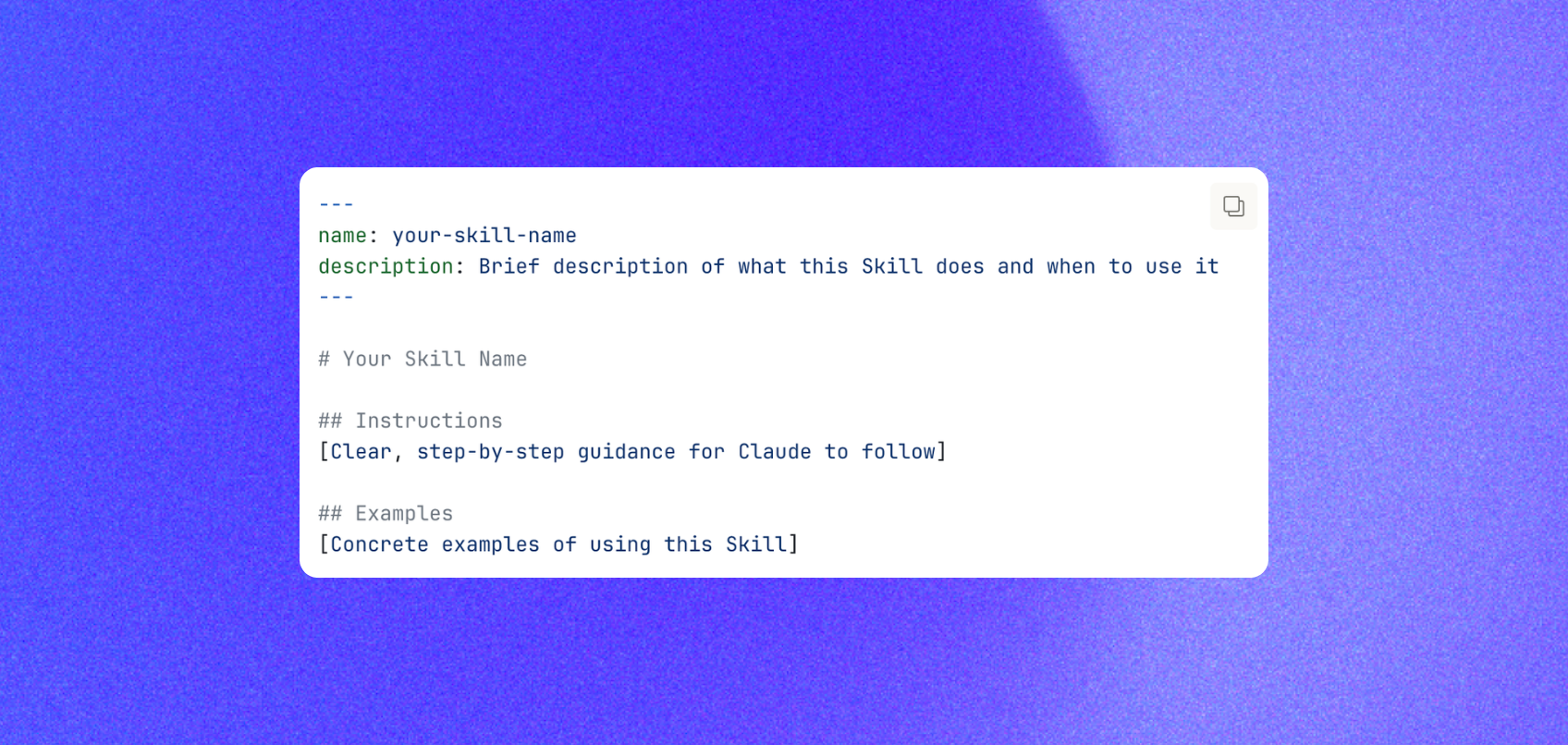

Per the nao docs, each skill is a markdown file; the skills page listed under Relevant docs below describes the expected structure.

Suggested workflow:

- Create or update skill files for each domain,

- Register availability and routing guidance in RULES.md,

- Run nao test to measure impact on answer quality, cost, and speed,

- Compare results against your previous baseline before rollout.
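The baseline comparison before rollout can be turned into a simple gate. A minimal sketch, assuming you have copied the aggregate numbers from each evaluation run into a small record by hand: the RunSummary fields and the 10% cost / 20% latency tolerances are hypothetical choices, and the snippet does not read nao test's actual output format.

```python
from dataclasses import dataclass


@dataclass
class RunSummary:
    """Hypothetical aggregate of one evaluation run (not nao test's output schema)."""
    accuracy: float   # share of benchmark questions answered correctly, 0..1
    cost_usd: float   # total cost of the run
    latency_s: float  # typical seconds per answer


def ready_to_roll_out(baseline: RunSummary, candidate: RunSummary,
                      max_cost_increase: float = 0.10,
                      max_latency_increase: float = 0.20) -> tuple[bool, list[str]]:
    """Block rollout if quality drops or cost/speed regress beyond the tolerances."""
    reasons = []
    if candidate.accuracy < baseline.accuracy:
        reasons.append(f"accuracy dropped {baseline.accuracy:.0%} -> {candidate.accuracy:.0%}")
    if candidate.cost_usd > baseline.cost_usd * (1 + max_cost_increase):
        reasons.append("cost increased beyond tolerance")
    if candidate.latency_s > baseline.latency_s * (1 + max_latency_increase):
        reasons.append("latency increased beyond tolerance")
    return (not reasons, reasons)


baseline = RunSummary(accuracy=0.80, cost_usd=10.0, latency_s=4.0)  # made-up numbers
candidate = RunSummary(accuracy=0.85, cost_usd=10.5, latency_s=4.2)
print(ready_to_roll_out(baseline, candidate))  # (True, [])
```

Treating any accuracy drop as blocking while allowing small cost and latency increases reflects a choice to prioritize answer quality; teams with tight budgets may weigh these differently.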

Relevant docs:

- Skills capability: nao docs - skills,

- Evaluation framework: nao docs - nao test.

This turns skills from static instructions into measurable infrastructure for agentic analytics.

Common mistakes to avoid

- Building one giant skill for every workflow,

- Skipping benchmark-based evaluation,

- Letting the agent access all sources without a priority model,

- Returning answers without assumptions and validation checks,

- Treating context engineering as optional.

If your goal is reliable chat with data, these mistakes create hidden risk fast.

Final takeaway

For data teams, the path to better analytics agents is not more prompt experimentation.

It is domain-specific skills, clear context engineering rules, and measurable iteration with nao test.

When done well, everyone can chat with data using the same definitions, the same guardrails, and the same operational logic.

Claire

For nao team
