How to Build Production-Ready AI Agents for Data Analytics: The Complete Guide

A complete guide to building reliable AI agents for data analytics - from context engineering to production deployment

4 February 2026

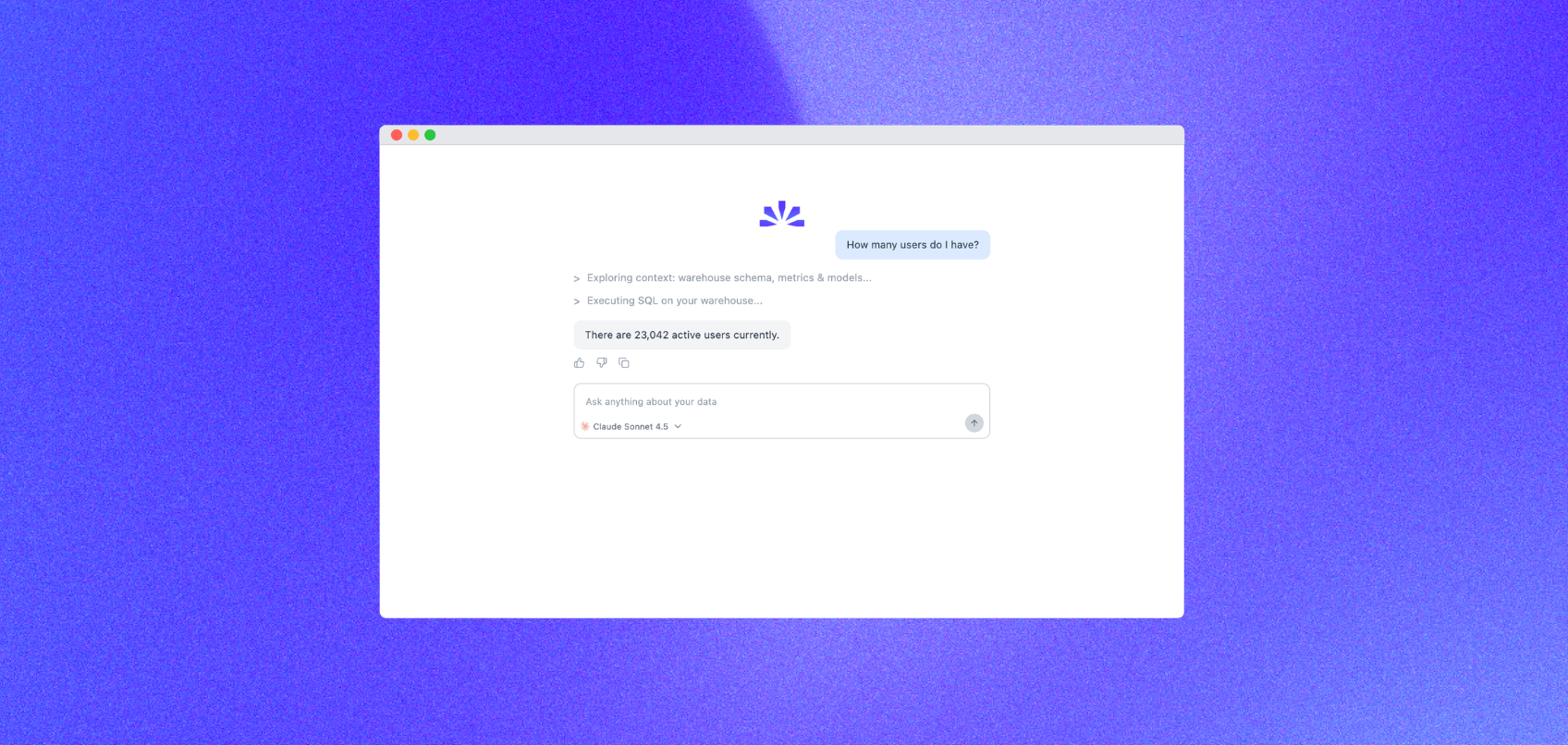

By Claire, Co-founder & CEO

Everyone is building AI agents for data analytics. The demos look impressive - ask a question in natural language, get a SQL query, see results. Ship it.

Then production happens. The agent hallucinates table names. It writes queries that time out. It returns results that look right but are subtly wrong. Users lose trust after the third bad answer.

At nao, we've built production AI agents for data analytics. Here's what actually matters when moving from prototype to production.

Why Most AI Agents Fail in Production

The gap between demo and production is massive. Most teams underestimate three critical problems:

1. The Context Problem

LLMs don't understand your data warehouse. They can't see your schema, they don't know your column names, and they have no idea about your business logic. Without deep context, agents guess - and guesses fail.

Standard RAG (Retrieval-Augmented Generation) helps, but it's not enough. You need more than table names in a vector database. You need:

- Schema relationships: Foreign keys, join patterns, common aggregations

- Business semantics: What "revenue" actually means in your org (is it gross? net? which currency?)

- dbt lineage: How models build on each other, dependencies, transformations

- Historical queries: What queries actually get run, how users phrase questions

2. No Evaluation Framework

You can't improve what you don't measure. Most teams deploy agents without systematic evaluation, then wonder why accuracy drops over time.

Production-grade agents need continuous evaluation on:

- Query correctness: Does the SQL actually answer the question?

- Performance: Query execution time, cost per query

- Semantic accuracy: Are results logically correct for the business question?

- Edge cases: How does it handle ambiguous requests, null values, date ranges?

3. Poor User Experience

Even when agents work technically, they often fail on UX. Users need to:

- Understand what the agent did (show the SQL, explain the logic)

- Trust the results (cite sources, show data freshness)

- Iterate quickly (refine queries, explore related questions)

- Debug when things break (clear error messages, suggested fixes)

How to Build Reliable AI Agents

Here's the systematic approach that works:

Step 1: Build Deep Context

Start with schema ingestion. Connect to your data warehouse and pull the complete schema - tables, columns, types, relationships. Don't just store names; capture metadata like column descriptions, common values, cardinality.
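A minimal sketch of schema ingestion, using SQLite's catalog (`sqlite_master`, `PRAGMA table_info`, `PRAGMA foreign_key_list`) so it runs anywhere; on Postgres, Snowflake or BigQuery you would query `information_schema` instead. The `ColumnInfo`/`TableInfo` names are hypothetical, and full `COUNT(DISTINCT ...)` scans can be expensive on large warehouse tables, so sampling may be a better fit there.

```python
import sqlite3
from dataclasses import dataclass, field


@dataclass
class ColumnInfo:
    name: str
    type: str
    cardinality: int       # number of distinct values
    common_values: list    # most frequent values, most common first


@dataclass
class TableInfo:
    name: str
    columns: list = field(default_factory=list)
    foreign_keys: list = field(default_factory=list)  # (column, ref_table, ref_column)


def ingest_schema(conn: sqlite3.Connection, top_n: int = 3) -> dict:
    """Return {table_name: TableInfo} with types, foreign keys and simple column stats."""
    tables = {}
    names = [row[0] for row in conn.execute(
        "SELECT name FROM sqlite_master WHERE type = 'table' AND name NOT LIKE 'sqlite_%'"
    )]
    for table in names:
        info = TableInfo(name=table)
        # PRAGMA table_info rows: (cid, name, type, notnull, dflt_value, pk)
        for row in conn.execute(f'PRAGMA table_info("{table}")').fetchall():
            col, col_type = row[1], row[2]
            cardinality = conn.execute(
                f'SELECT COUNT(DISTINCT "{col}") FROM "{table}"').fetchone()[0]
            common = [r[0] for r in conn.execute(
                f'SELECT "{col}" FROM "{table}" GROUP BY "{col}" '
                f'ORDER BY COUNT(*) DESC LIMIT ?', (top_n,))]
            info.columns.append(ColumnInfo(col, col_type, cardinality, common))
        # PRAGMA foreign_key_list rows: (id, seq, table, from, to, on_update, on_delete, match)
        for row in conn.execute(f'PRAGMA foreign_key_list("{table}")').fetchall():
            info.foreign_keys.append((row[3], row[2], row[4]))
        tables[table] = info
    return tables
```

Table and column names are interpolated from the catalog itself, never from user input, which is why plain f-strings are acceptable in this sketch.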

Add semantic layers. Document business logic in a structured way. What's the definition of "active user"? What's the difference between "orders" and "completed_orders"? This tribal knowledge is gold for agents.
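One lightweight way to make this tribal knowledge machine-readable is a small registry of definitions that gets matched against each question. This is a sketch under assumptions, not a specific semantic-layer product: `MetricDefinition`, `SEMANTIC_LAYER`, and the definitions of "active user" and "completed orders" below are hypothetical placeholders, and substring matching stands in for whatever retrieval you actually use.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class MetricDefinition:
    name: str
    description: str
    sql: str               # canonical SQL expression or filter
    synonyms: tuple = ()   # phrasings users actually type


# Hypothetical definitions for illustration; your own business logic goes here.
SEMANTIC_LAYER = [
    MetricDefinition(
        name="active_user",
        description="User with at least one session in the trailing 28 days.",
        sql="last_session_at >= DATE('now', '-28 days')",
        synonyms=("active users", "actives", "engaged users"),
    ),
    MetricDefinition(
        name="completed_orders",
        description="Orders with status 'completed'; excludes cancelled and refunded.",
        sql="status = 'completed'",
        synonyms=("completed orders", "fulfilled orders"),
    ),
]


def definitions_for(question: str, layer=SEMANTIC_LAYER) -> list:
    """Return the definitions whose name or synonyms appear in the question."""
    q = question.lower()
    return [
        m for m in layer
        if m.name.replace("_", " ") in q or any(s in q for s in m.synonyms)
    ]


def render_context(defs: list) -> str:
    """Format matched definitions as a block to inject into the prompt."""
    return "\n".join(f"- {m.name}: {m.description} SQL: {m.sql}" for m in defs)
```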

Parse dbt projects. If you use dbt (and you should), your manifest.json is a treasure trove: it has model definitions, dependencies, tests, and documentation. Feed this into your agent's context. If you haven't set up dbt yet, our step-by-step dbt setup guide walks you through creating your first models with AI assistance.
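A minimal sketch of pulling that context out of `target/manifest.json` (dbt's default artifact location). It relies only on the manifest's `nodes` map and each node's `resource_type`, `name`, `description`, `columns` and `depends_on.nodes` fields; the shape of the returned dictionary and the helper names are our own assumptions. Tests are separate nodes that depend on the models they check, which is how they get attached to models here.

```python
import json


def load_dbt_context(manifest: dict) -> dict:
    """Extract models, docs, lineage and tests from a parsed dbt manifest."""
    nodes = manifest.get("nodes", {})
    models = {}
    for uid, node in nodes.items():
        if node.get("resource_type") != "model":
            continue
        models[uid] = {
            "name": node.get("name"),
            "description": node.get("description", ""),
            "columns": {c: meta.get("description", "")
                        for c, meta in node.get("columns", {}).items()},
            "parents": node.get("depends_on", {}).get("nodes", []),  # upstream lineage
            "tests": [],
        }
    # Test nodes list the models they check in depends_on.nodes.
    for node in nodes.values():
        if node.get("resource_type") == "test":
            for parent in node.get("depends_on", {}).get("nodes", []):
                if parent in models:
                    models[parent]["tests"].append(node.get("name"))
    return models


def load_manifest(path: str = "target/manifest.json") -> dict:
    with open(path, encoding="utf-8") as f:
        return load_dbt_context(json.load(f))
```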

Learn from usage. Capture successful queries and user feedback. When someone asks "show me MRR by region" and accepts the result, store that pattern. It becomes training data for better context retrieval.
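A minimal sketch of that feedback loop, assuming a hypothetical `QueryMemory` store that keeps only accepted question/SQL pairs and retrieves similar past questions by token overlap (Jaccard similarity). In production you would likely swap the overlap score for embeddings and persist the store; both are outside this sketch.

```python
import re
from dataclasses import dataclass, field


def _tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


@dataclass
class QueryMemory:
    """Stores question -> SQL pairs that users accepted."""
    examples: list = field(default_factory=list)

    def record(self, question: str, sql: str, accepted: bool) -> None:
        # Only accepted answers become retrieval examples.
        if accepted:
            self.examples.append((question, sql))

    def similar(self, question: str, k: int = 3, min_score: float = 0.2) -> list:
        """Return up to k past (question, sql) pairs ranked by Jaccard token overlap."""
        q = _tokens(question)
        scored = []
        for past_q, sql in self.examples:
            p = _tokens(past_q)
            score = len(q & p) / len(q | p) if q | p else 0.0
            if score >= min_score:
                scored.append((score, past_q, sql))
        scored.sort(key=lambda t: t[0], reverse=True)
        return [(past_q, sql) for _, past_q, sql in scored[:k]]
```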

Step 2: Implement Context Engineering

Context engineering is the practice of systematically providing the right information to your LLM at query time. It's more important than prompt engineering. We wrote a full breakdown of why this discipline needs its own open framework in our post "Why data teams need an open framework for context engineering."

Use tiered retrieval:

- Identify relevant tables based on the question (semantic search)

- Pull detailed schema for those tables (full column definitions)

- Add related context (common joins, example queries)

- Include business logic (semantic definitions, calculations)
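The four tiers above can be sketched as one function. Everything here is illustrative: `build_context` and the shapes of `catalog`, `joins`, `examples` and `definitions` are hypothetical, and simple keyword overlap stands in for the semantic search in tier 1. The point is the ordering: only tables that survive tier 1 get their full column definitions, joins and examples pulled in.

```python
import re


def _tokens(text: str) -> set:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def build_context(question, catalog, joins, examples, definitions, max_tables=3):
    """Assemble prompt context in four tiers; keyword overlap stands in for semantic search."""
    q = _tokens(question)

    def score(table):
        return len(q & _tokens(table + " " + catalog[table]["description"]))

    # Tier 1: pick the most relevant tables (sorted is stable, so ties keep catalog order).
    tables = [t for t in sorted(catalog, key=score, reverse=True)[:max_tables] if score(t) > 0]
    sections = []
    # Tier 2: full column definitions, but only for the selected tables.
    for t in tables:
        cols = ", ".join(f"{c} {typ}" for c, typ in catalog[t]["columns"].items())
        sections.append(f"TABLE {t}({cols})")
    # Tier 3: join patterns and example queries that touch the selected tables.
    sections += [f"JOIN {cond}" for (a, b), cond in joins.items() if a in tables and b in tables]
    sections += [f"EXAMPLE {sql}" for table, sql in examples if table in tables]
    # Tier 4: business definitions for terms that appear in the question.
    sections += [f"DEFINE {term}: {d}" for term, d in definitions.items()
                 if term in question.lower()]
    return tables, "\n".join(sections)
```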

Keep context fresh. Schemas change, new tables get added, and business logic evolves. Refresh your context daily, not weekly.
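A daily refresh does not have to rebuild everything blindly. One approach, sketched here under the assumption that you can snapshot the schema as `{table: {column: type}}`, is to hash each snapshot and rebuild context only when the hash moves, with a diff reporting what changed. `schema_fingerprint`, `diff_schema` and `refresh_if_needed` are hypothetical helpers.

```python
import hashlib
import json


def schema_fingerprint(schema: dict) -> str:
    """Stable hash of {table: {column: type}}; changes whenever the schema does."""
    canonical = json.dumps(schema, sort_keys=True)
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


def diff_schema(old: dict, new: dict) -> dict:
    """Report what changed between two {table: {column: type}} snapshots."""
    changed = {}
    for t in old.keys() & new.keys():
        added = sorted(new[t].keys() - old[t].keys())
        removed = sorted(old[t].keys() - new[t].keys())
        retyped = sorted(c for c in old[t].keys() & new[t].keys() if old[t][c] != new[t][c])
        if added or removed or retyped:
            changed[t] = {"added": added, "removed": removed, "retyped": retyped}
    return {
        "new_tables": sorted(new.keys() - old.keys()),
        "dropped_tables": sorted(old.keys() - new.keys()),
        "changed_tables": changed,
    }


def refresh_if_needed(stored_fp, current_schema, rebuild):
    """Call rebuild(current_schema) only when the fingerprint moved; return the new one."""
    fp = schema_fingerprint(current_schema)
    if fp != stored_fp:
        rebuild(current_schema)
    return fp
```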

Step 3: Build an Evaluation Loop

Create a test suite of real questions with known correct answers. Run your agent against this suite on every change. For a complete 7-step evaluation methodology, see our guide "How to Evaluate an Analytics Agent."
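A minimal sketch of such a suite runner, assuming each known-correct answer is stored as a gold SQL query and `agent` is any callable that turns a question into SQL (both hypothetical, as are the function names). Comparing result sets rather than SQL text avoids penalizing queries that are written differently but return the same rows; the comparison ignores row order but counts duplicates.

```python
import sqlite3
from collections import Counter


def run_sql(conn, sql):
    """Execute SQL; return rows, or None if the query fails."""
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.Error:
        return None


def same_result(a, b):
    """Order-insensitive comparison of two result sets (duplicate rows count)."""
    return a is not None and b is not None and Counter(a) == Counter(b)


def evaluate(agent, suite, conn):
    """agent: question -> SQL string. suite: list of (question, gold_sql)."""
    results = []
    for question, gold_sql in suite:
        got = run_sql(conn, agent(question))
        results.append({
            "question": question,
            "valid_sql": got is not None,
            "correct": same_result(got, run_sql(conn, gold_sql)),
        })
    return results
```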

Track metrics:

- Query success rate (did it generate valid SQL?)

- Semantic accuracy (did it answer the right question?)

- Latency (p50, p95, p99 response times)

- Cost per query (LLM API calls, compute)
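These metrics can be aggregated from per-query records. The sketch assumes a hypothetical `QueryRecord` shape and uses the nearest-rank definition of a percentile; other definitions (interpolated, for example) give slightly different numbers on small samples.

```python
import math
from dataclasses import dataclass


@dataclass
class QueryRecord:
    valid_sql: bool
    correct: bool            # semantic accuracy, from result comparison or a human label
    latency_s: float
    llm_cost_usd: float
    warehouse_cost_usd: float


def percentile(values, p):
    """Nearest-rank percentile (p in 0..100) of a non-empty list."""
    ordered = sorted(values)
    rank = max(1, math.ceil(p / 100 * len(ordered)))
    return ordered[rank - 1]


def summarize(records):
    n = len(records)
    latencies = [r.latency_s for r in records]
    return {
        "query_success_rate": sum(r.valid_sql for r in records) / n,
        "semantic_accuracy": sum(r.correct for r in records) / n,
        "latency_p50": percentile(latencies, 50),
        "latency_p95": percentile(latencies, 95),
        "latency_p99": percentile(latencies, 99),
        "cost_per_query_usd": sum(r.llm_cost_usd + r.warehouse_cost_usd for r in records) / n,
    }
```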

Use human review. Sample random queries daily and have someone review them. Are the SQL queries optimal? Are results accurate? This catches issues before users do.
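A small sketch of the daily sample, assuming a hypothetical query log of dicts with a `ts` datetime field. Seeding the random generator with the date makes the sample reproducible, so two reviewers (or a rerun of the job) see the same queries for a given day.

```python
import datetime
import random


def daily_review_sample(query_log, day: datetime.date, k: int = 20):
    """Pick up to k of the day's queries for human review; same day -> same sample."""
    todays = [q for q in query_log if q["ts"].date() == day]
    rng = random.Random(day.isoformat())  # seeded by date for reproducibility
    return rng.sample(todays, min(k, len(todays)))
```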

Step 4: Design for Transparency

Show your work. Always display:

- The generated SQL query (formatted, with comments)

- Which tables were used and why

- Data freshness (when was this table last updated?)

- Confidence level (is the agent certain or guessing?)
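A minimal sketch of a response object that carries everything above alongside the results, plus a plain-text renderer. `AgentAnswer` and `render` are hypothetical names; how confidence is estimated is left open, and the label is simply passed through.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass
class AgentAnswer:
    sql: str
    tables_used: dict     # table name -> why it was chosen
    last_updated: dict    # table name -> datetime of last load
    confidence: str       # e.g. "high", "medium", "low"


def render(answer: AgentAnswer, now: datetime = None) -> str:
    """Produce the user-facing explanation that accompanies the results."""
    now = now or datetime.now(timezone.utc)
    lines = ["SQL:", answer.sql, "", "Tables:"]
    for table, reason in answer.tables_used.items():
        updated = answer.last_updated.get(table)
        if updated is None:
            freshness = "freshness unknown"
        else:
            hours = (now - updated).total_seconds() / 3600
            freshness = f"updated {hours:.0f}h ago"
        lines.append(f"- {table}: {reason} ({freshness})")
    lines.append(f"Confidence: {answer.confidence}")
    return "\n".join(lines)
```

Showing "freshness unknown" rather than omitting the line keeps the gap visible to the user instead of implying the data is current.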

Users trust agents more when they can verify the logic. Make everything inspectable.

Step 5: Handle Errors Gracefully

When (not if) things break, fail helpfully:

- Clear error messages: "I couldn't find a table named 'customer_orders'. Did you mean 'customers_orders'?"

- Suggested fixes: "Try asking: 'Show me orders from the last 30 days'"

- Fallback options: "I can show you related data from the 'orders' table instead"
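The "did you mean" suggestion can be produced with Python's standard `difflib.get_close_matches`, which ranks candidates by `SequenceMatcher` similarity. A minimal sketch, where `table_not_found_message` is a hypothetical helper and the 0.6 cutoff is `difflib`'s default rather than a tuned value:

```python
import difflib


def table_not_found_message(missing, known_tables, fallback_hint=None):
    """Build an actionable error: suggest near-miss table names, else offer a fallback."""
    suggestions = difflib.get_close_matches(missing, known_tables, n=3, cutoff=0.6)
    msg = f"I couldn't find a table named '{missing}'."
    if suggestions:
        options = " or ".join(f"'{s}'" for s in suggestions)
        msg += f" Did you mean {options}?"
    elif fallback_hint:
        msg += f" {fallback_hint}"
    return msg
```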

The Production Checklist

Before deploying an AI agent to production, verify:

Context Infrastructure

- Schema ingestion automated (updates daily)

- Business logic documented and indexed

- dbt lineage integrated (if applicable)

- Historical query patterns captured

Evaluation & Monitoring

- Test suite with 50+ real questions

- Automated accuracy benchmarks

- Performance monitoring (latency, cost)

- Human review process for sample queries

User Experience

- SQL queries shown to users

- Data freshness displayed

- Error messages are actionable

- Iteration workflow (refine, explore)

Reliability

- Query timeout limits set

- Rate limiting implemented

- Fallback for LLM API failures

- Audit logs for all queries
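As one illustration of the Reliability items, here is a minimal sketch of a query timeout plus an audit entry for every query, using SQLite's progress handler so it stays self-contained. On a real warehouse you would rely on its native statement timeout instead; `run_with_timeout` and the audit entry fields are hypothetical, and rate limiting and LLM fallbacks are not covered here.

```python
import sqlite3
import time


def run_with_timeout(conn, sql, timeout_s, audit_log, user):
    """Execute sql, aborting if it runs longer than timeout_s; always write an audit entry."""
    deadline = time.monotonic() + timeout_s
    # SQLite calls the handler every 1000 VM instructions; a truthy return aborts the query.
    conn.set_progress_handler(lambda: time.monotonic() > deadline, 1000)
    started = time.monotonic()
    entry = {"user": user, "sql": sql, "status": "ok", "error": None}
    try:
        return conn.execute(sql).fetchall()
    except sqlite3.Error as exc:
        entry["status"] = "timeout" if "interrupted" in str(exc) else "error"
        entry["error"] = str(exc)
        return None
    finally:
        conn.set_progress_handler(None, 0)  # remove the handler for later queries
        entry["duration_s"] = round(time.monotonic() - started, 3)
        audit_log.append(entry)
```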

How nao Solves This

We built nao because we hit every one of these problems ourselves. Here is what we baked into the product from day one.

Context that runs deep

nao connects directly to your data warehouse and ingests your full schema automatically — tables, columns, types, relationships, and cardinality. It does not stop at names. It reads your dbt project manifest to understand model lineage, dependencies, and documentation. Business logic you have already written in dbt becomes first-class context inside the agent.

When you ask a question, nao does not search a flat vector store. It runs tiered retrieval: identify relevant models → pull full column definitions → attach join patterns and example queries → layer in metric definitions. The agent sees exactly what it needs and nothing it does not.

Context refreshes on every sync. Schema changes surface automatically — nao does not serve stale answers.

Evaluation built in, not bolted on

nao ships with a built-in evaluation layer. Every query is logged with the tables used, the SQL generated, and the execution result. You can review any interaction and mark it correct or incorrect, and those labels feed back into context ranking.

Accuracy metrics are visible on your workspace dashboard — not buried in logs. You see query success rate, semantic accuracy on your labeled test set, and p95 latency over time. When something regresses, you know before your users do.

Transparency users can trust

nao always shows the generated SQL, formatted and readable. Users see which tables were queried, when those tables were last refreshed, and the confidence level of the answer. There is no black box.

When the agent is uncertain, it says so. When a query fails, it explains why and suggests a corrected version. Users stop second-guessing results because they can inspect the logic at every step.

Production reliability without the boilerplate

Query timeout limits, rate limiting, and fallback handling ship out of the box. Audit logs are automatic. You do not build this infrastructure — you ship it.

Teams using nao move from prototype to production in days, not months. The context engineering, evaluation loop, and UX foundations are already there. You focus on your data and your questions.

What's Next

Building production-ready AI agents for data analytics is hard. It requires deep context engineering, systematic evaluation, and thoughtful UX design.

nao handles all of this so your team does not have to build it from scratch. Connect your warehouse, load your dbt project, and your agent is production-ready on day one. Not sure which AI analytics approach fits your stack? Read our AI data agents comparison to understand the trade-offs.

Want to see how we handle context engineering, evaluation, and production reliability? Check out our documentation or join our Slack community to discuss with other data teams building AI agents.

Claire

For the nao team
