How to Do Data Modeling for AI Agents: 8 Practical Rules

A practical guide to data modeling for AI agents, with clear rules to optimize for precision, reliability, and better chat-with-data performance.

1 April 2026

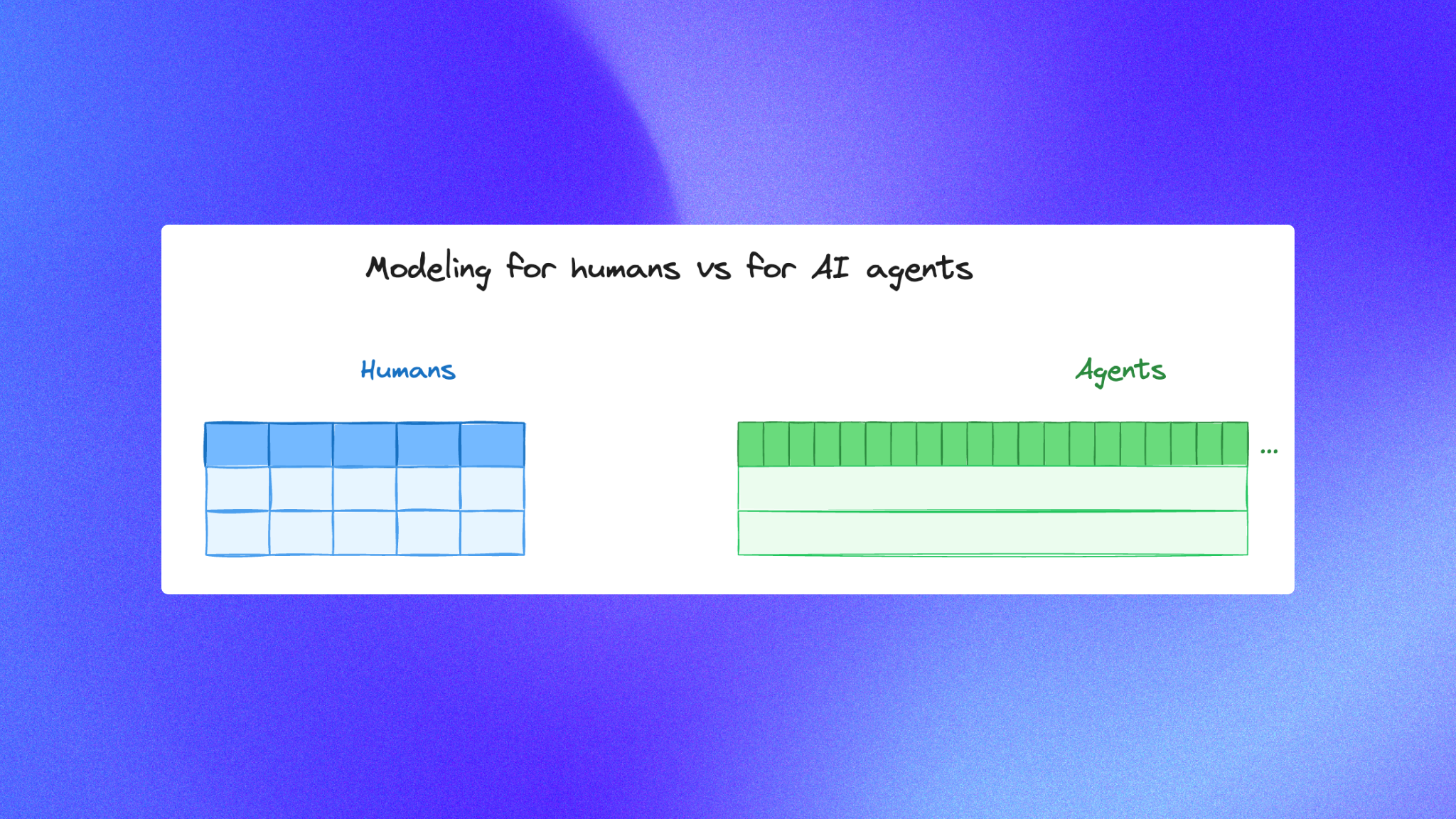

By ClaireCo-founder & CEOMost data models were designed for humans.

That made sense for BI dashboards and analyst workflows: fewer tables, fewer fields, clean naming, and high readability.

But an analytics agent is not a human analyst.

When you build for agentic analytics, the optimization target changes from readability to precision.

You can give an analytics agent a table with 100 columns and it can still pick the right one. If you only give it 10 broad columns and expect it to derive the rest, reliability drops fast.

This guide explains how to do data modeling for AI agents in a way that improves answer quality, keeps your data stack governed, and makes chat with data reliable at scale.

Why traditional modeling patterns break for AI agents

Human-first modeling usually prefers:

- fewer, wider concepts

- hidden intermediate logic

- semantic shortcuts optimized for manual exploration

Agent-first modeling needs the opposite:

- explicit fields for business meaning

- lower ambiguity at column level

- minimal derivation required at query time

Agents do best when the model says exactly what it means.

If you are also designing your broader context process, this context engineering guide is a good companion.

Rule 1: Optimize for precision, not elegance

A model that feels elegant to humans can be under-specified for an analytics agent.

For example, a single status field that mixes lifecycle, billing, and fulfillment states is readable in a dashboard, but ambiguous for an agent.

Instead, split meaning:

lifecycle_statusbilling_statusfulfillment_status

The model becomes more verbose, but far more reliable.

Rule 2: Keep derivations in the model, not in the prompt

If a business metric requires logic, define it in SQL models, not ad hoc instructions.

In practice:

- create explicit metric-ready columns in your curated layer

- avoid asking the agent to infer logic from raw columns

- document formulas in dbt descriptions so they are queryable context

This is where dbt is critical. It turns business logic into versioned, testable assets instead of hidden prompt text.

If your team is still maturing this layer, the dbt setup guide and data stack guide are useful starting points.

Rule 3: Prefer explicit columns over compressed abstractions

For human readers, abstraction can reduce cognitive load.

For an analytics agent, abstraction can remove signal.

A common mistake is collapsing many useful dimensions into one pre-aggregated table and dropping the original explanatory fields. The agent can answer fewer question variants and fails on edge cases.

As a default, keep high-value dimensions explicit unless there is a clear cost reason not to.

Rule 4: Model grain and time semantics explicitly

Agents frequently fail on time windows and entity grain mismatches.

Reduce that failure class with clear modeling patterns:

- one stable grain per fact table

- explicit timestamp semantics (

created_at,booked_at,paid_at) - explicit date helper fields (

order_date,order_week,order_month) - clear timezone policy in documentation

When grain is unclear, the agent guesses joins and aggregations. Precision modeling removes that guesswork.

Rule 5: Use naming conventions that encode intent

Naming is a modeling interface for the model.

Choose names that reduce ambiguity:

is_refundedinstead ofrefund_flagnet_revenue_usdinstead ofrevenuecustomer_first_paid_atinstead ofstart_date

These naming decisions compound across your analytics agent performance because they make SQL generation more deterministic.

Rule 6: Add “decision columns,” not only reporting columns

Human BI often emphasizes reporting outputs.

Analytics agents need decision-friendly context fields that explain why a number changes:

- lifecycle transition columns

- attribution flags

- quality status fields

- source-system provenance columns

These fields increase column count, but they reduce reasoning errors and improve traceability.

Rule 7: Model for join reliability first

Many wrong answers come from wrong joins, not wrong arithmetic.

Your agent-ready model should make joins hard to misuse:

- consistent primary key naming

- explicit foreign keys in curated models

- bridge tables where many-to-many is expected

- docstrings that call out safe join paths

Treat join clarity as a product requirement for chat with data.

For teams evaluating reliability systematically, this analytics agent evaluation walkthrough shows how to test failures by question type.

Rule 8: Test the model with real agent questions

Do not stop at SQL correctness tests. Add question-driven evaluation:

- pick 30 to 50 real stakeholder prompts

- define expected result tables

- run the same set after every model or context change

This closes the loop between data modeling and agent behavior, which is the core of agentic analytics operations.

A simple architecture that works

For most teams, a robust baseline is:

- raw and staging models for ingestion cleanup

- curated fact and dimension models with explicit semantics

- dbt documentation and tests as context inputs

- evaluation harness tied to business questions

- open source tooling where transparency and control matter

This approach keeps your analytics agent grounded in precise structure instead of prompt improvisation.

Final takeaway

With humans, we often simplify models for readability.

With AI agents, you should simplify ambiguity, not structure.

More explicit fields, clearer grain, and better documented semantics usually beat minimalist modeling every time.

If your goal is trustworthy chat with data, precision-first data modeling is not optional. It is the foundation.

Claire

For nao team

Related articles

product updates

We're launching the first Open Source Analytics Agent Builder

We're open sourcing nao — an analytics agent framework built on context engineering. Here's our vision for what comes after black-box BI.

product updates

Launching nao automations

Schedule your nao analytics agents to run in the background. Recurring reports, conditional alerts, and a new Feed to track all agent activity.

Buyer's Guide

LangChain vs Wren AI vs nao: which open-source tool for agentic analytics?

Three open-source projects for agentic analytics, tested on the same BigQuery question. Setup, accuracy, context approach, and which to pick.